ML + Unity3D

Unconventional Game Controller

2023

Working as a team of three, we combined machine learning with a game engine to explore alternative modes of gaming using object detection and speech recognition.

For this project, we investigated how ML models for object detection and speech recognition could open up alternative modes of gaming through unconventional controllers.

As an interaction design problem, the research aims to break down the boundary between screen space and the physical environment, so that the physical, analogue objects in our surroundings can be brought into the virtual world. In this way, the physical “real” world and the virtual world can co-exist and work in tandem to create unique and immersive experiences.

ML Application

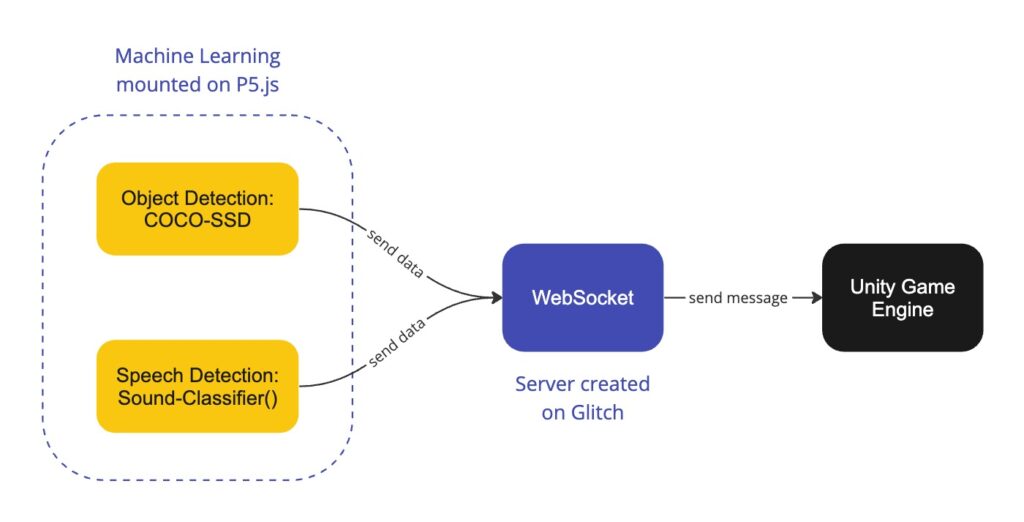

We used ml5’s Sound Classifier and TensorFlow’s COCO-SSD object detection model, running both in a p5.js sketch. The object detection code was refined to send the message “ball” through a websocket to the Glitch server whenever it sees a tennis ball. Similarly, the sound classifier was coded to send the messages “up”, “go”, and “stop” through the websocket.
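As a rough illustration of the mapping described above, the sketch below turns model output into the four string messages. It is a minimal sketch, not our project code: the function names and confidence thresholds are hypothetical, and it assumes the tennis ball is reported under COCO's "sports ball" label (COCO has no dedicated tennis ball class). It also assumes predictions shaped like `{ class, score }` for COCO-SSD and results shaped like `{ label, confidence }` for the sound classifier.

```javascript
// Hypothetical helpers; names and thresholds are illustrative only.
const VOICE_COMMANDS = new Set(["up", "go", "stop"]);

// COCO-SSD predictions look like { class, score, bbox }.
// Assumption: a tennis ball shows up under the "sports ball" label.
function messageForDetections(predictions, minScore = 0.5) {
  const sawBall = predictions.some(
    (p) => p.class === "sports ball" && p.score >= minScore
  );
  return sawBall ? "ball" : null;
}

// Sound classifier results look like { label, confidence }.
// Pick the most confident label and keep it only if it is a known command.
function messageForSound(results, minConfidence = 0.9) {
  if (!results || results.length === 0) return null;
  const top = results.reduce((best, r) =>
    r.confidence > best.confidence ? r : best
  );
  const isCommand = VOICE_COMMANDS.has(top.label);
  return isCommand && top.confidence >= minConfidence ? top.label : null;
}

// Send a message only when there is one and the socket is open.
// readyState 1 is the standard WebSocket OPEN state.
function sendIfOpen(socket, message) {
  if (message !== null && socket.readyState === 1) {
    socket.send(message);
    return true;
  }
  return false;
}
```

In a p5.js sketch, functions like these would be called from the model callbacks, with `socket` being a browser `WebSocket` connected to the server.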

One of the major challenges we faced was figuring out how to have p5.js communicate with Unity, which is scripted in C#.

After many iterations, we arrived at a solution by adding a websocket plugin (Native WebSocket) to our C# setup in Visual Studio Code, so that the C# code could receive simple string messages from the Glitch websocket and act on them in Unity3D.
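The server's role in this pipeline is to pass each string from the p5.js sketch on to Unity. Our server code is not reproduced here, so the following is only a minimal sketch of one common relay pattern, with a hypothetical `relay` function that works on any collection of socket-like objects exposing `readyState` and `send`.

```javascript
const OPEN = 1; // standard readyState value for an open WebSocket

// Forward one message from `sender` to every other open client.
// `clients` is any iterable of socket-like objects ({ readyState, send }).
// Returns how many clients the message was delivered to.
function relay(clients, sender, message) {
  let delivered = 0;
  for (const client of clients) {
    if (client !== sender && client.readyState === OPEN) {
      client.send(String(message)); // always forward as plain text
      delivered += 1;
    }
  }
  return delivered;
}
```

With a Node websocket library such as `ws`, a function like this could be called from each connection's message handler, passing the server's set of connected clients. Unity then only needs to compare the received string against "ball", "up", "go", and "stop".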

The video on the right shows our early tests connecting websocket messages to specific behaviours in Unity3D.

Watch the demo ↓

This project was submitted by Tamika Yamamoto, Shipra Balasubramani, and Yueming Gao in partial fulfillment of the Introduction to Artificial Intelligence course taught by Prof. Alexis Moore in OCAD University’s Digital Futures graduate program.